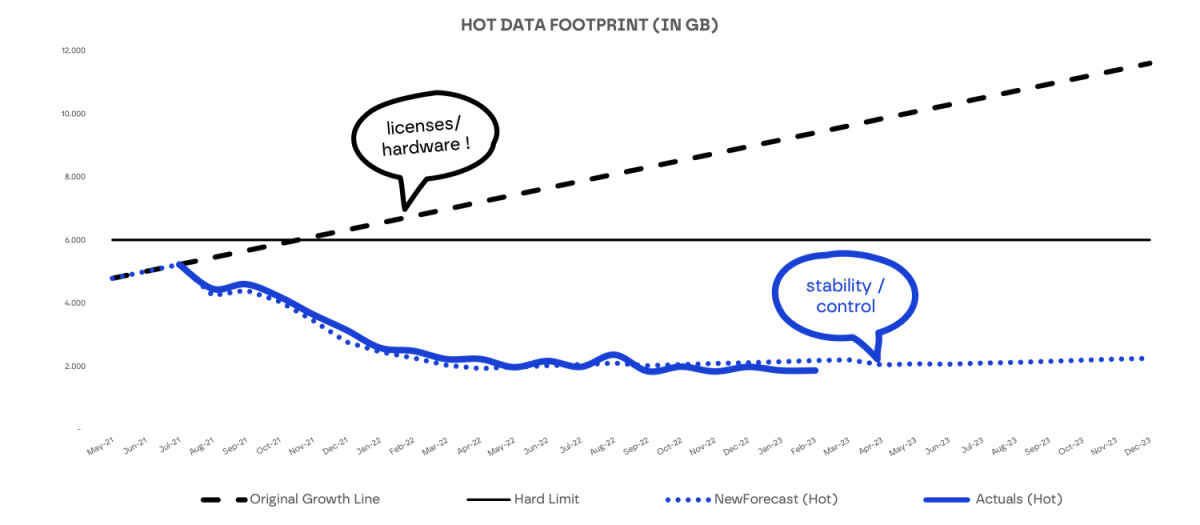

Data is at the heart of the banking system, so efficiently running systems are vital to a bank's operations. Explore how we helped a bank whose SAP HANA hot memory was flooded as a result of natural data growth and the onboarding of additional datasets.

ABOUT THE CLIENT

The SAP HANA hot memory was flooded by increasing data volumes, caused by natural growth and the onboarding of additional datasets. As a consequence, processing memory was squeezed, and out-of-memory situations and intense swapping occurred. Instability loomed, and additional investment in hardware became necessary.

The read and write patterns of the data processes were analysed, and a prioritised plan of steps was created. The underlying tables were partitioned, and the data processes were pruned so that they read only the necessary data. Infrequently accessed data was placed in the SAP HANA NSE warm store. Design recommendations were drawn up for new software, which helps to manage data volume growth proactively.
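The two ideas in this approach, reading only the partitions a process needs and moving rarely read partitions to a warm tier, can be illustrated with a minimal Python sketch. It is not the client's implementation: the `Partition` layout, the yearly ranges, the dates and the 365-day threshold are all hypothetical, and in SAP HANA itself the tiering would be done through the load unit of the table or partition rather than in application code.

```python
from dataclasses import dataclass
from datetime import date, timedelta


@dataclass(frozen=True)
class Partition:
    """One range partition of a table, keyed by a date column (hypothetical layout)."""
    name: str
    start: date      # inclusive lower bound of the range
    end: date        # exclusive upper bound of the range
    last_read: date  # most recent read seen in the access-pattern analysis


def prune(partitions, query_start, query_end):
    """Return only the partitions whose range overlaps [query_start, query_end)."""
    return [p for p in partitions if p.start < query_end and p.end > query_start]


def assign_tier(partition, today, warm_after_days=365):
    """Classify a partition as 'hot' or 'warm' by how recently it was read.

    The 365-day threshold is an assumption made for illustration only.
    """
    idle = today - partition.last_read
    return "warm" if idle > timedelta(days=warm_after_days) else "hot"


# Made-up sample data: four yearly partitions with their last observed read.
partitions = [
    Partition("p2021", date(2021, 1, 1), date(2022, 1, 1), last_read=date(2022, 3, 1)),
    Partition("p2022", date(2022, 1, 1), date(2023, 1, 1), last_read=date(2023, 2, 1)),
    Partition("p2023", date(2023, 1, 1), date(2024, 1, 1), last_read=date(2024, 5, 20)),
    Partition("p2024", date(2024, 1, 1), date(2025, 1, 1), last_read=date(2024, 6, 1)),
]
today = date(2024, 6, 1)

# Tiering: partitions not read for more than a year become warm-store candidates.
tiers = {p.name: assign_tier(p, today) for p in partitions}

# Pruning: a process that needs March 2024 touches a single partition.
scanned = [p.name for p in prune(partitions, date(2024, 3, 1), date(2024, 4, 1))]

print(tiers)
print(scanned)
```

With this sample data, the two oldest partitions are flagged as warm and the March 2024 read touches only `p2024`, which is the effect the partitioning and pruning steps aim for.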

Optimisation, modelling, simplification

Banking

SAP HANA, SAP HANA NSE, Informatica PowerCenter